mirror of

https://github.com/arch3rPro/1Panel-Appstore.git

synced 2026-04-14 16:07:13 +08:00

feat: add apps OpenDeepWiki


@@ -274,6 +274,15 @@

</td>
<td width="33%" align="center">

<a href="./apps/opendeepwiki/README.md">
<img src="./apps/opendeepwiki/logo.png" width="60" height="60" alt="OpenDeepWiki">
<br><b>OpenDeepWiki</b>
</a>

An AI-driven open-source code knowledge base and documentation collaboration platform, supporting multiple models, multiple databases, and intelligent document generation

<kbd>latest</kbd> • [Official website](https://opendeep.wiki/)

</td>
</tr>
</table>

225

apps/opendeepwiki/README.md

Normal file


@@ -0,0 +1,225 @@

|

||||

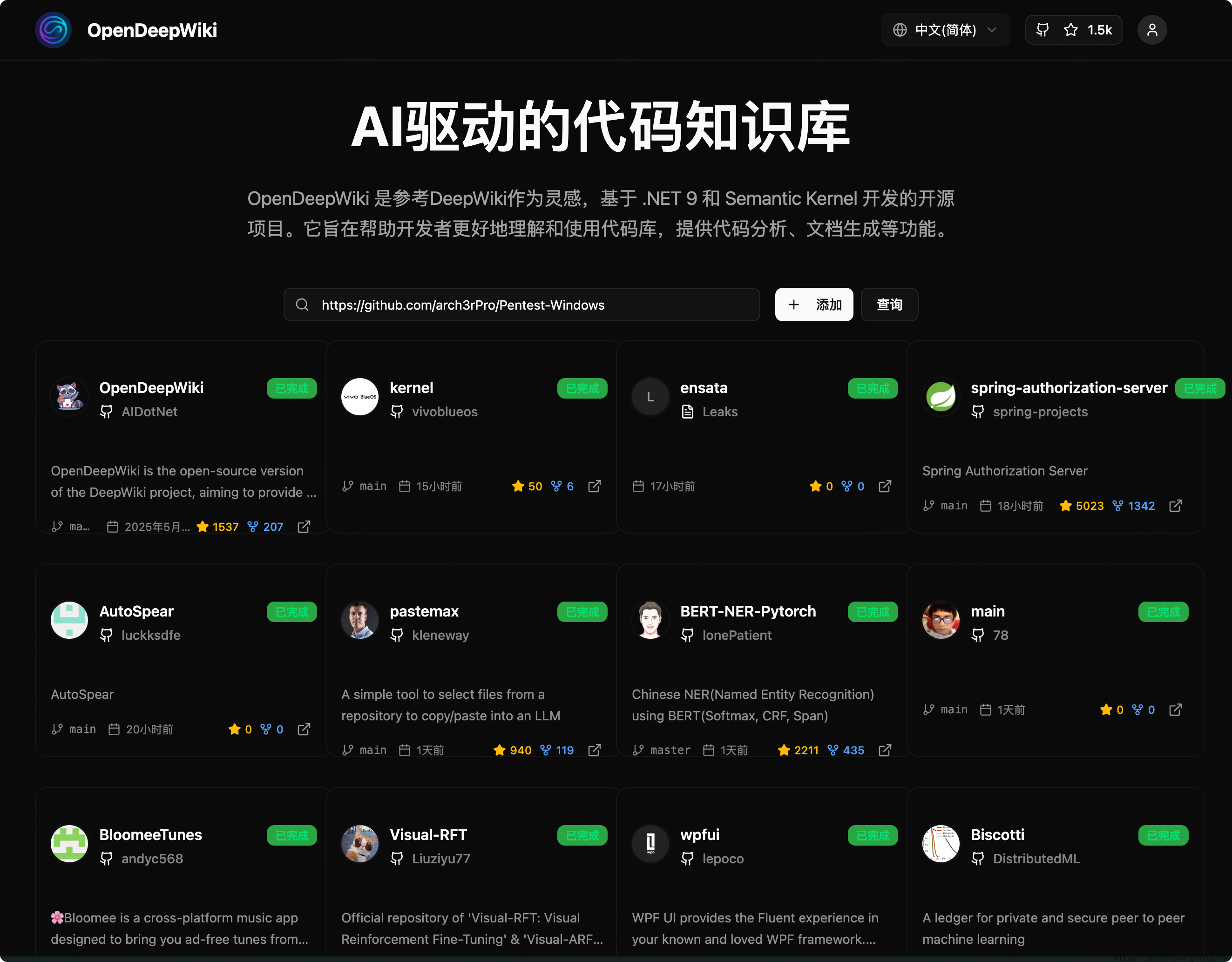

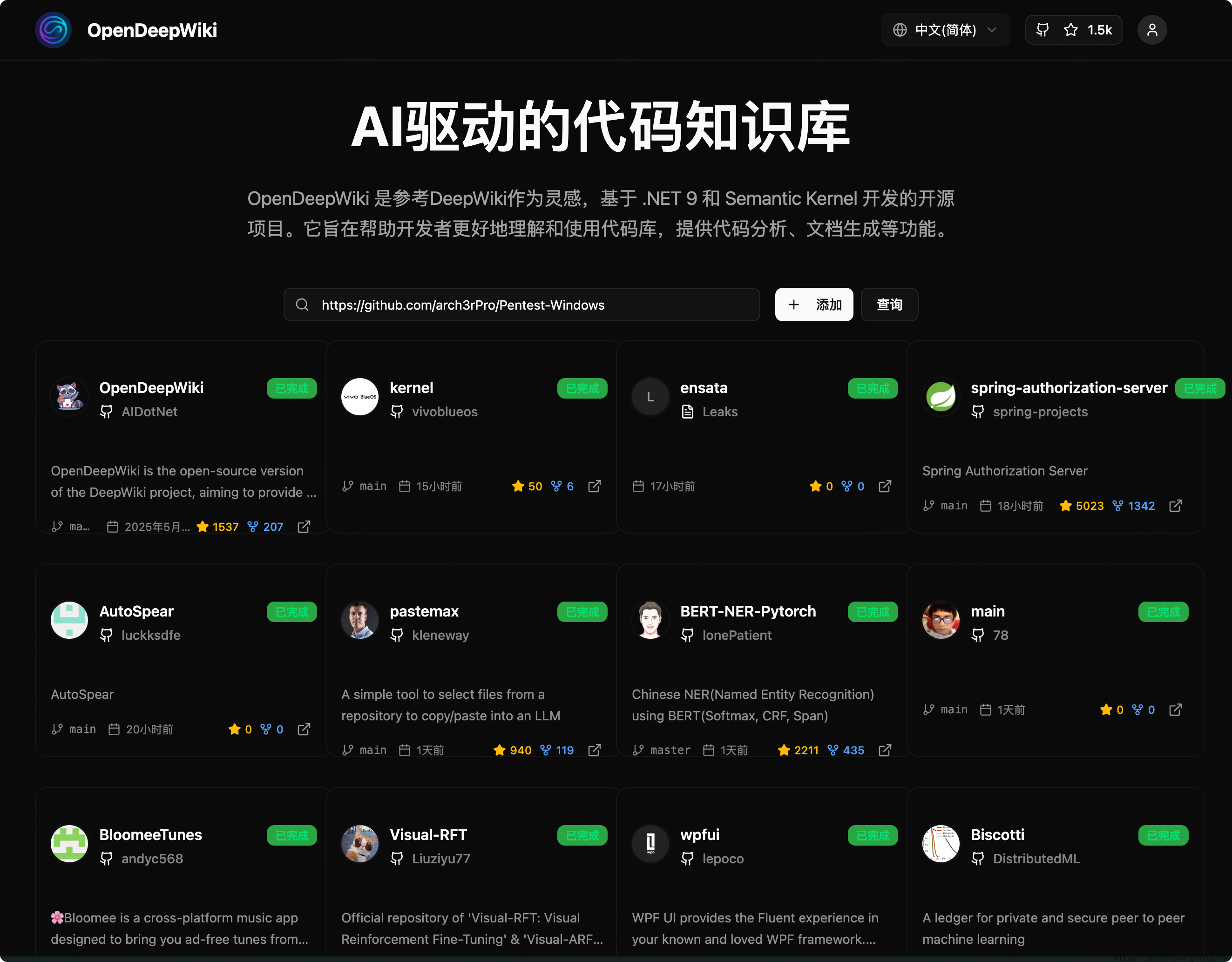

# OpenDeepWiki

|

||||

|

||||

AI驱动的代码知识库

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

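### Features

- **Quick Conversion**: Converts code repositories from GitHub, GitLab, Gitee, Gitea, and similar hosts into knowledge bases within minutes.
- **Multi-language Support**: Supports code analysis and documentation generation for all programming languages.
- **Code Structure Diagrams**: Automatically generates Mermaid diagrams to help you understand code structure.
- **Custom Model Support**: Supports custom models and custom APIs for flexible extension.
- **AI Intelligent Analysis**: Uses AI for code analysis and for understanding relationships within the code.
- **SEO Friendly**: Generates SEO-friendly documentation and knowledge bases with Next.js, making them easy for search engines to crawl.
- **Conversational Interaction**: Lets you chat with the AI to get detailed code information and usage guidance for a deeper understanding of the code.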

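### Feature List

- [x] Multiple code repositories (GitHub, GitLab, Gitee, Gitea, etc.)
- [x] Multiple programming languages (Python, Java, C#, JavaScript, etc.)
- [x] Repository management (create, read, update, and delete repositories)
- [x] Multiple AI providers (OpenAI, AzureOpenAI, Anthropic, etc.)
- [x] Multiple databases (SQLite, PostgreSQL, SqlServer, MySQL, etc.)
- [x] Multiple languages (Chinese, English, French, etc.)
- [x] Upload of ZIP files and local files
- [x] Data fine-tuning platform for generating fine-tuning datasets
- [x] Directory-level repository management with dynamically generated directories and documents
- [x] Repository directory modification management
- [x] User management (create, read, update, and delete users)
- [x] User permission management
- [x] Repository-level generation of datasets for different fine-tuning frameworks

---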

### Project Introduction

OpenDeepWiki is an open-source project inspired by [DeepWiki](https://deepwiki.com/) and built on .NET 9 and Semantic Kernel. It aims to help developers better understand and use code repositories by providing code analysis, documentation generation, and knowledge graph construction.

Main features:

- Analyze code structure
- Understand the core concepts of a repository
- Generate code documentation
- Automatically generate a README.md for the code
- Support MCP (Model Context Protocol)

---

### MCP Support

OpenDeepWiki supports the MCP protocol:

- It can act as an MCP server for a single repository and perform repository analysis.

Example configuration:

```json
{
  "mcpServers": {
    "OpenDeepWiki": {
      "url": "http://Your OpenDeepWiki service IP:port/sse?owner=AIDotNet&name=OpenDeepWiki"
    }
  }
}
```

- owner: name of the organization or owner the repository belongs to
- name: repository name

After adding the repository, you can test it by asking a question such as "What is OpenDeepWiki?". The result is shown in the figure below:

In this way, OpenDeepWiki can act as an MCP server that other AI models can call, making it easier to analyze and understand open-source projects.

---

### 🚀 Quick Start

1. Clone the repository

```bash
git clone https://github.com/AIDotNet/OpenDeepWiki.git
cd OpenDeepWiki
```

2. Modify the environment variables in `docker-compose.yml`:

- OpenAI example:

```yaml
services:
  koalawiki:
    environment:
      - KOALAWIKI_REPOSITORIES=/repositories
      - TASK_MAX_SIZE_PER_USER=5 # Maximum number of parallel AI document generation tasks per user
      - CHAT_MODEL=DeepSeek-V3 # The model must support function calling
      - ANALYSIS_MODEL= # Analysis model used to generate the repository directory structure
      - CHAT_API_KEY= # Your API key
      - LANGUAGE= # Default generation language, e.g. "Chinese"
      - ENDPOINT=https://api.token-ai.cn/v1
      - DB_TYPE=sqlite
      - MODEL_PROVIDER=OpenAI # Model provider; supports OpenAI, AzureOpenAI, Anthropic
      - DB_CONNECTION_STRING=Data Source=/data/KoalaWiki.db
      - EnableSmartFilter=true # Whether to enable smart filtering; affects the AI's ability to obtain the repository file directory
      - UPDATE_INTERVAL=5 # Repository incremental update interval, in days
      - MAX_FILE_LIMIT=100 # Maximum upload file size, in MB
      - DEEP_RESEARCH_MODEL= # Deep research model; if empty, CHAT_MODEL is used
      - ENABLE_INCREMENTAL_UPDATE=true # Whether to enable incremental updates
      - ENABLE_CODED_DEPENDENCY_ANALYSIS=false # Whether to enable code dependency analysis; may affect code quality
      - ENABLE_WAREHOUSE_COMMIT=true # Whether to enable repository commits
      - ENABLE_FILE_COMMIT=true # Whether to enable file commits
      - REFINE_AND_ENHANCE_QUALITY=true # Whether to refine and enhance quality
      - ENABLE_WAREHOUSE_FUNCTION_PROMPT_TASK=true # Whether to enable the repository function prompt task
      - ENABLE_WAREHOUSE_DESCRIPTION_TASK=true # Whether to enable the repository description task
      - CATALOGUE_FORMAT=compact # Directory structure format (compact, json, pathlist, unix)
      - ENABLE_CODE_COMPRESSION=false # Whether to enable code compression
```

- AzureOpenAI and Anthropic are configured in a similar way; only `ENDPOINT` and `MODEL_PROVIDER` need to be adjusted.

### Database Configuration

#### SQLite (Default)

```yaml
- DB_TYPE=sqlite
- DB_CONNECTION_STRING=Data Source=/data/KoalaWiki.db
```

#### PostgreSQL

```yaml
- DB_TYPE=postgres
- DB_CONNECTION_STRING=Host=localhost;Database=KoalaWiki;Username=postgres;Password=password
```

#### SQL Server

```yaml
- DB_TYPE=sqlserver
- DB_CONNECTION_STRING=Server=localhost;Database=KoalaWiki;Trusted_Connection=true;
```

#### MySQL

```yaml
- DB_TYPE=mysql
- DB_CONNECTION_STRING=Server=localhost;Database=KoalaWiki;Uid=root;Pwd=password;
```

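### 🔍 How It Works

OpenDeepWiki uses AI to:

- Clone the code repository locally
- Read the .gitignore configuration and ignore irrelevant files
- Recursively scan directories to collect all files and directories
- Check whether the file count exceeds a threshold; if it does, call the AI model to intelligently filter the directory
- Parse the directory JSON data returned by the AI
- Generate or update README.md
- Call the AI model to generate repository classification information and a project overview
- Clean up project analysis tag content and save the project overview to the database
- Call the AI to generate a thinking directory (task list)
- Recursively process directory tasks to generate the document directory structure
- Save the directory structure to the database
- Process unfinished document tasks
- For Git repositories, clean up old commit records, then call the AI to generate an update log and save it

---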

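### Detailed Flow Chart: From Repository Parsing to Documentation

```mermaid
graph TD
    A[Clone code repository] --> B[Read .gitignore configuration to ignore files]
    B --> C[Recursively scan directories to get all files and directories]
    C --> D{Does file count exceed threshold?}
    D -- No --> E[Directly return directory structure]
    D -- Yes --> F[Call AI model for intelligent directory structure filtering]
    F --> G[Parse AI-returned directory JSON data]
    E --> G
    G --> H[Generate or update README.md]
    H --> I[Call AI model to generate repository classification information]
    I --> J[Call AI model to generate project overview information]
    J --> K[Clean project analysis tag content]
    K --> L[Save project overview to database]
    L --> M[Call AI to generate thinking directory task list]
    M --> N[Recursively process directory tasks to generate DocumentCatalog]
    N --> O[Save directory structure to database]
    O --> P[Process incomplete document tasks]
    P --> Q{Is repository type Git?}
    Q -- Yes --> R[Clean old commit records]
    R --> S[Call AI to generate update log]
    S --> T[Save update log to database]
    Q -- No --> T
```

---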

### Advanced Configuration

#### Environment Variables

- `KOALAWIKI_REPOSITORIES`: Repository storage path
- `TASK_MAX_SIZE_PER_USER`: Maximum number of parallel AI document generation tasks per user
- `CHAT_MODEL`: Chat model (must support function calling)
- `ENDPOINT`: API endpoint
- `ANALYSIS_MODEL`: Analysis model used to generate the repository directory structure
- `CHAT_API_KEY`: API key
- `LANGUAGE`: Language of the generated documentation
- `DB_TYPE`: Database type; supports sqlite, postgres, sqlserver, mysql (default: sqlite)
- `MODEL_PROVIDER`: Model provider; defaults to OpenAI, also supports AzureOpenAI and Anthropic
- `DB_CONNECTION_STRING`: Database connection string
- `EnableSmartFilter`: Whether to enable smart filtering; affects the AI's ability to obtain the repository directory
- `UPDATE_INTERVAL`: Repository incremental update interval (days)
- `MAX_FILE_LIMIT`: Maximum upload file size (MB)
- `DEEP_RESEARCH_MODEL`: Deep research model; if empty, CHAT_MODEL is used
- `ENABLE_INCREMENTAL_UPDATE`: Whether to enable incremental updates
- `ENABLE_CODED_DEPENDENCY_ANALYSIS`: Whether to enable code dependency analysis; may affect code quality
- `ENABLE_WAREHOUSE_COMMIT`: Whether to enable repository commits
- `ENABLE_FILE_COMMIT`: Whether to enable file commits
- `REFINE_AND_ENHANCE_QUALITY`: Whether to refine and enhance quality
- `ENABLE_WAREHOUSE_FUNCTION_PROMPT_TASK`: Whether to enable the repository function prompt task
- `ENABLE_WAREHOUSE_DESCRIPTION_TASK`: Whether to enable the repository description task
- `CATALOGUE_FORMAT`: Directory structure format (compact, json, pathlist, unix)
- `ENABLE_CODE_COMPRESSION`: Whether to enable code compression

210

apps/opendeepwiki/README_en.md

Normal file


@@ -0,0 +1,210 @@

# OpenDeepWiki

AI-driven Code Knowledge Base

### Features

- **Quick Conversion**: Convert all code repositories (GitHub, GitLab, Gitee, Gitea, etc.) into knowledge bases within minutes.
- **Multi-language Support**: Analyze and generate documentation for all programming languages.
- **Code Structure Diagrams**: Automatically generate Mermaid diagrams to help understand code structure.
- **Custom Model Support**: Support for custom models and APIs for flexible extension.
- **AI Intelligent Analysis**: AI-based code analysis and relationship understanding.
- **SEO Friendly**: Generates SEO-friendly documentation and knowledge bases based on Next.js.
- **Conversational Interaction**: Chat with AI to get detailed code information and usage.

### Feature List

- [x] Multiple code repositories (GitHub, GitLab, Gitee, Gitea, etc.)
- [x] Multiple programming languages (Python, Java, C#, JavaScript, etc.)
- [x] Repository management (CRUD)
- [x] Multiple AI providers (OpenAI, AzureOpenAI, Anthropic, etc.)
- [x] Multiple databases (SQLite, PostgreSQL, SqlServer, MySQL, etc.)
- [x] Multiple languages (Chinese, English, French, etc.)
- [x] Upload ZIP and local files
- [x] Data fine-tuning platform
- [x] Directory-level repository management
- [x] Repository directory modification
- [x] User management (CRUD)
- [x] User permission management
- [x] Generate fine-tuning datasets for different frameworks

---

### Project Introduction

OpenDeepWiki is an open-source project inspired by [DeepWiki](https://deepwiki.com/), developed with .NET 9 and Semantic Kernel. It helps developers better understand and utilize code repositories, providing code analysis, documentation generation, and knowledge graph construction.

Main features:

- Analyze code structure
- Understand repository core concepts
- Generate code documentation
- Automatically generate README.md for code
- Support MCP (Model Context Protocol)

---

### MCP Support

OpenDeepWiki supports the MCP protocol:

- It can serve as an MCP server for a single repository and perform repository analysis.

Example configuration:

```json
{
  "mcpServers": {
    "OpenDeepWiki": {
      "url": "http://Your OpenDeepWiki service IP:port/sse?owner=AIDotNet&name=OpenDeepWiki"
    }
  }
}
```

- owner: Repository organization or owner name
- name: Repository name
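The `url` value in the example configuration is an SSE endpoint that takes `owner` and `name` as query parameters. The following is a minimal Python sketch of how such a URL and config entry could be assembled. The helper names `build_mcp_url` and `build_mcp_config`, and any host or port you pass in, are illustrative assumptions; only the `/sse` path, the two parameter names, and the `mcpServers` layout come from the example above.

```python
from urllib.parse import urlencode


def build_mcp_url(host: str, port: int, owner: str, name: str) -> str:
    """Assemble the SSE URL from the example (hypothetical helper)."""
    # URL-encode owner and name so unusual repository names stay valid.
    query = urlencode({"owner": owner, "name": name})
    return f"http://{host}:{port}/sse?{query}"


def build_mcp_config(host: str, port: int, owner: str, name: str) -> dict:
    """Return a dict that mirrors the JSON example configuration."""
    url = build_mcp_url(host, port, owner, name)
    return {"mcpServers": {"OpenDeepWiki": {"url": url}}}
```

Serializing the result of `build_mcp_config` with `json.dumps` should give a document with the same shape as the example configuration.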

After adding the repository, you can test by asking questions like "What is OpenDeepWiki?", as shown below:

This way, OpenDeepWiki can serve as an MCPServer for other AI models to call, facilitating analysis and understanding of open-source projects.

---

### 🚀 Quick Start

1. Clone the repository

```bash
git clone https://github.com/AIDotNet/OpenDeepWiki.git
cd OpenDeepWiki
```

2. Modify environment variables in `docker-compose.yml`:

- OpenAI example:

```yaml
services:
  koalawiki:
    environment:
      - KOALAWIKI_REPOSITORIES=/repositories
      - TASK_MAX_SIZE_PER_USER=5 # Maximum number of parallel AI document generation tasks per user
      - CHAT_MODEL=DeepSeek-V3 # Model must support function calling
      - ANALYSIS_MODEL= # Analysis model for generating repository directory structure
      - CHAT_API_KEY= # Your API key
      - LANGUAGE= # Default generation language, e.g., "Chinese"
      - ENDPOINT=https://api.token-ai.cn/v1
      - DB_TYPE=sqlite
      - MODEL_PROVIDER=OpenAI # Model provider, supports OpenAI, AzureOpenAI, Anthropic
      - DB_CONNECTION_STRING=Data Source=/data/KoalaWiki.db
      - EnableSmartFilter=true # Whether to enable smart filtering, affects AI's ability to get repository file directories
      - UPDATE_INTERVAL=5 # Repository incremental update interval in days
      - MAX_FILE_LIMIT=100 # Maximum upload file size in MB
      - DEEP_RESEARCH_MODEL= # Deep research model, if empty uses CHAT_MODEL
      - ENABLE_INCREMENTAL_UPDATE=true # Whether to enable incremental updates
      - ENABLE_CODED_DEPENDENCY_ANALYSIS=false # Whether to enable code dependency analysis, may affect code quality
      - ENABLE_WAREHOUSE_COMMIT=true # Whether to enable repository commits
      - ENABLE_FILE_COMMIT=true # Whether to enable file commits
      - REFINE_AND_ENHANCE_QUALITY=true # Whether to refine and enhance quality
      - ENABLE_WAREHOUSE_FUNCTION_PROMPT_TASK=true # Whether to enable the repository function prompt task
      - ENABLE_WAREHOUSE_DESCRIPTION_TASK=true # Whether to enable the repository description task
      - CATALOGUE_FORMAT=compact # Directory structure format (compact, json, pathlist, unix)
      - ENABLE_CODE_COMPRESSION=false # Whether to enable code compression
```

- AzureOpenAI and Anthropic configurations are similar; just adjust `ENDPOINT` and `MODEL_PROVIDER`.

### Database Configuration

#### SQLite (Default)

```yaml
- DB_TYPE=sqlite
- DB_CONNECTION_STRING=Data Source=/data/KoalaWiki.db
```

#### PostgreSQL

```yaml
- DB_TYPE=postgres
- DB_CONNECTION_STRING=Host=localhost;Database=KoalaWiki;Username=postgres;Password=password
```

#### SQL Server

```yaml
- DB_TYPE=sqlserver
- DB_CONNECTION_STRING=Server=localhost;Database=KoalaWiki;Trusted_Connection=true;
```

#### MySQL

```yaml
- DB_TYPE=mysql
- DB_CONNECTION_STRING=Server=localhost;Database=KoalaWiki;Uid=root;Pwd=password;
```
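The four snippets above differ only in `DB_TYPE` and the shape of the connection string. As a rough illustration, the Python sketch below renders the matching pair of environment entries for a chosen database. The function name `db_environment` and the placeholder field names (`path`, `host`, `database`, `user`, `password`) are assumptions made for this example; the templates themselves are copied from the snippets above.

```python
# Connection string templates copied from the examples above.
# The placeholder names inside the braces are illustrative assumptions.
TEMPLATES = {
    "sqlite": "Data Source={path}",
    "postgres": "Host={host};Database={database};Username={user};Password={password}",
    "sqlserver": "Server={host};Database={database};Trusted_Connection=true;",
    "mysql": "Server={host};Database={database};Uid={user};Pwd={password};",
}


def db_environment(db_type: str = "sqlite", **fields: str) -> list:
    """Return the two environment entries for the chosen database type."""
    if db_type not in TEMPLATES:
        raise ValueError(f"unsupported DB_TYPE: {db_type!r}")
    # str.format raises KeyError if a required field is missing.
    connection = TEMPLATES[db_type].format(**fields)
    return [f"DB_TYPE={db_type}", f"DB_CONNECTION_STRING={connection}"]
```

For instance, `db_environment("sqlite", path="/data/KoalaWiki.db")` yields the two lines of the default SQLite example.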

---

### How It Works

OpenDeepWiki leverages AI to:

- Clone code repository locally
- Read .gitignore configuration to ignore irrelevant files
- Recursively scan directories to get all files and directories
- Determine if file count exceeds threshold; if so, call AI model for intelligent directory filtering
- Parse AI-returned directory JSON data
- Generate or update README.md
- Call AI model to generate repository classification information and project overview
- Clean project analysis tag content and save project overview to database
- Call AI to generate thinking directory (task list)
- Recursively process directory tasks to generate document directory structure
- Save directory structure to database
- Process incomplete document tasks
- If Git repository, clean old commit records, call AI to generate update log and save
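The first few steps in the list (scan recursively, skip ignored files, then decide whether the AI filter is needed) can be sketched as follows. This is not OpenDeepWiki's implementation, which is written in .NET. It is a simplified Python illustration that matches ignore patterns against file and directory names with `fnmatch` instead of applying full .gitignore semantics, and the `threshold` parameter is hypothetical because the README does not state the actual value.

```python
import fnmatch
import os


def _ignored(name: str, patterns: list) -> bool:
    """True if a file or directory name matches any ignore pattern."""
    return any(fnmatch.fnmatch(name, pattern) for pattern in patterns)


def scan_repository(root: str, ignore_patterns: list) -> list:
    """Recursively list files under root, skipping ignored names."""
    found = []
    for current, dirs, files in os.walk(root):
        # Prune ignored directories in place so os.walk does not descend into them.
        dirs[:] = [d for d in dirs if not _ignored(d, ignore_patterns)]
        for name in files:
            if not _ignored(name, ignore_patterns):
                found.append(os.path.relpath(os.path.join(current, name), root))
    return sorted(found)


def needs_smart_filter(paths: list, threshold: int) -> bool:
    """Decide whether the file list is large enough to hand to the AI filter."""
    return len(paths) > threshold
```

When `needs_smart_filter` returns `False`, the scanned list would be used directly, which corresponds to the "No" branch of the threshold check in the flow chart.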

---

### Detailed Flow Chart: From Repository Parsing to Documentation

```mermaid
graph TD
    A[Clone code repository] --> B[Read .gitignore configuration to ignore files]
    B --> C[Recursively scan directories to get all files and directories]
    C --> D{Does file count exceed threshold?}
    D -- No --> E[Directly return directory structure]
    D -- Yes --> F[Call AI model for intelligent directory structure filtering]
    F --> G[Parse AI-returned directory JSON data]
    E --> G
    G --> H[Generate or update README.md]
    H --> I[Call AI model to generate repository classification information]
    I --> J[Call AI model to generate project overview information]
    J --> K[Clean project analysis tag content]
    K --> L[Save project overview to database]
    L --> M[Call AI to generate thinking directory task list]
    M --> N[Recursively process directory tasks to generate DocumentCatalog]
    N --> O[Save directory structure to database]
    O --> P[Process incomplete document tasks]
    P --> Q{Is repository type Git?}
    Q -- Yes --> R[Clean old commit records]
    R --> S[Call AI to generate update log]
    S --> T[Save update log to database]
    Q -- No --> T
```

---

### Advanced Configuration

#### Environment Variables

- `KOALAWIKI_REPOSITORIES`: Repository storage path
- `TASK_MAX_SIZE_PER_USER`: Maximum number of parallel AI document generation tasks per user
- `CHAT_MODEL`: Chat model (must support function calling)
- `ENDPOINT`: API endpoint
- `ANALYSIS_MODEL`: Analysis model for generating repository directory structure
- `CHAT_API_KEY`: API key
- `LANGUAGE`: Document generation language
- `DB_TYPE`: Database type, supports sqlite, postgres, sqlserver, mysql (default: sqlite)
- `MODEL_PROVIDER`: Model provider, default OpenAI, supports AzureOpenAI, Anthropic
- `DB_CONNECTION_STRING`: Database connection string
- `EnableSmartFilter`: Whether to enable smart filtering, affects AI's ability to get repository directories
- `UPDATE_INTERVAL`: Repository incremental update interval (days)
- `MAX_FILE_LIMIT`: Maximum upload file size (MB)
- `DEEP_RESEARCH_MODEL`: Deep research model, if empty uses CHAT_MODEL
- `ENABLE_INCREMENTAL_UPDATE`: Whether to enable incremental updates
- `ENABLE_CODED_DEPENDENCY_ANALYSIS`: Whether to enable code dependency analysis, may affect code quality
- `ENABLE_WAREHOUSE_COMMIT`: Whether to enable repository commits
- `ENABLE_FILE_COMMIT`: Whether to enable file commits
- `REFINE_AND_ENHANCE_QUALITY`: Whether to refine and enhance quality
- `ENABLE_WAREHOUSE_FUNCTION_PROMPT_TASK`: Whether to enable the repository function prompt task
- `ENABLE_WAREHOUSE_DESCRIPTION_TASK`: Whether to enable the repository description task
- `CATALOGUE_FORMAT`: Directory structure format (compact, json, pathlist, unix)
- `ENABLE_CODE_COMPRESSION`: Whether to enable code compression
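Several of the variables above have documented defaults or fallbacks: `DB_TYPE` defaults to sqlite, `MODEL_PROVIDER` defaults to OpenAI, and an empty `DEEP_RESEARCH_MODEL` falls back to `CHAT_MODEL`. The Python sketch below only illustrates those rules. The function name `resolve_settings` and the returned dictionary keys are assumptions for this example, and the project's own .NET code may resolve these values differently.

```python
import os


def resolve_settings(env=None) -> dict:
    """Apply the documented defaults and fallbacks to a set of environment values."""
    env = os.environ if env is None else env
    chat_model = env.get("CHAT_MODEL", "")
    return {
        "chat_model": chat_model,
        # Empty or missing DEEP_RESEARCH_MODEL falls back to CHAT_MODEL.
        "deep_research_model": env.get("DEEP_RESEARCH_MODEL") or chat_model,
        # Documented default database type.
        "db_type": env.get("DB_TYPE") or "sqlite",
        # Documented default model provider.
        "model_provider": env.get("MODEL_PROVIDER") or "OpenAI",
    }
```

Passing a plain dictionary instead of `os.environ` makes it easy to check a configuration before writing it into `docker-compose.yml`.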

|

||||

28

apps/opendeepwiki/data.yml

Normal file


@@ -0,0 +1,28 @@

name: OpenDeepWiki
tags:
  - 知识库
  - AI
title: 开源AI知识库与文档协作平台
description: OpenDeepWiki 是一款支持多模型、多数据库、智能文档生成的开源AI知识库系统,适合企业和个人搭建智能文档与知识协作平台。
additionalProperties:
  key: opendeepwiki
  name: OpenDeepWiki
  tags:
    - KnowledgeBase
    - AI
  type: website
  crossVersionUpdate: true
  limit: 0
  recommend: 80
  shortDescZh: 开源AI知识库与文档协作平台
  shortDescEn: Open-source AI-powered knowledge base and documentation platform
  website: https://opendeep.wiki/
  github: https://github.com/AIDotNet/OpenDeepWiki
  document: https://github.com/AIDotNet/OpenDeepWiki#readme
  architectures:
    - amd64
    - arm64
  memoryRequired: 1024
  description:
    zh: OpenDeepWiki 是一款支持多模型、多数据库、智能文档生成的开源AI知识库系统,适合企业和个人搭建智能文档与知识协作平台。
    en: OpenDeepWiki is an open-source AI-powered knowledge base and documentation platform, supporting multi-model, multi-database, and intelligent document generation for teams and individuals.

33

apps/opendeepwiki/latest/data.yml

Normal file


@@ -0,0 +1,33 @@

additionalProperties:
  formFields:
    - default: 8090
      edit: true
      envKey: PANEL_APP_PORT_HTTP
      labelZh: Web 端口
      required: true
      rule: paramPort
      type: number
    - default: "https://your-api-endpoint/v1"
      edit: true
      envKey: ENDPOINT
      labelZh: API接口地址
      required: true
      type: text
    - default: "your-api-key"
      edit: true
      envKey: CHAT_API_KEY
      labelZh: API Key
      required: true
      type: password
    - default: "DeepSeek-V3"
      edit: true
      envKey: CHAT_MODEL
      labelZh: 对话模型
      required: true
      type: text
    - default: ""
      edit: true
      envKey: ANALYSIS_MODEL
      labelZh: 分析模型
      required: true
      type: text

76

apps/opendeepwiki/latest/docker-compose.yml

Normal file


@@ -0,0 +1,76 @@

services:
  opendeepwiki:
    image: crpi-j9ha7sxwhatgtvj4.cn-shenzhen.personal.cr.aliyuncs.com/koala-ai/koala-wiki
    container_name: ${CONTAINER_NAME}
    restart: always
    environment:
      - KOALAWIKI_REPOSITORIES=/repositories
      - TASK_MAX_SIZE_PER_USER=5 # Maximum number of AI document generation tasks per user
      - REPAIR_MERMAID=1 # Whether to repair Mermaid diagrams: 1 repairs, any other value does not
      - CHAT_MODEL=${CHAT_MODEL} # The model must support function calling
      - ANALYSIS_MODEL=${ANALYSIS_MODEL} # Analysis model used to generate the repository directory structure. This matters: the stronger the model, the better the generated structure. If empty, the chat model is used
      # GPT-4.1 is recommended as the analysis model; the chat model can be a different model for generating documents, to save token costs
      - CHAT_API_KEY=${CHAT_API_KEY} # Your API key
      - LANGUAGE= # Generation language, defaults to "中文" (Chinese); for English, enter English or 英文
      - ENDPOINT=${ENDPOINT}
      - DB_TYPE=sqlite
      - DB_CONNECTION_STRING=Data Source=/data/KoalaWiki.db
      - UPDATE_INTERVAL=5 # Repository incremental update interval, in days
      - EnableSmartFilter=true # Whether to enable smart filtering; this may affect the file directory the AI obtains from the repository
      - ENABLE_INCREMENTAL_UPDATE=true # Whether to enable incremental updates
      - ENABLE_CODED_DEPENDENCY_ANALYSIS=false # Whether to enable code dependency analysis; this may affect code quality
      - ENABLE_WAREHOUSE_FUNCTION_PROMPT_TASK=false # Whether to enable MCP prompt generation
      - ENABLE_WAREHOUSE_DESCRIPTION_TASK=false # Whether to enable repository description generation
      - OTEL_SERVICE_NAME=koalawiki
      - OTEL_EXPORTER_OTLP_PROTOCOL=grpc
      - OTEL_EXPORTER_OTLP_ENDPOINT=http://aspire-dashboard:18889
    volumes:
      - ./repositories:/app/repositories
      - ./data:/data
    networks:
      - 1panel-network
    labels:
      createdBy: "Apps"

  opendeepwiki-web:
    image: crpi-j9ha7sxwhatgtvj4.cn-shenzhen.personal.cr.aliyuncs.com/koala-ai/koala-wiki-web
    container_name: ${CONTAINER_NAME}-web
    restart: always
    environment:
      - NEXT_PUBLIC_API_URL=http://opendeepwiki:8080 # Backend address provided to the server side
    networks:
      - 1panel-network
    labels:
      createdBy: "Apps"

  nginx: # nginx is needed to proxy the frontend and backend behind a single port
    image: crpi-j9ha7sxwhatgtvj4.cn-shenzhen.personal.cr.aliyuncs.com/koala-ai/nginx:alpine
    container_name: ${CONTAINER_NAME}-nginx
    restart: always
    ports:
      - ${PANEL_APP_PORT_HTTP}:80
    volumes:
      - ./nginx/nginx.conf:/etc/nginx/conf.d/default.conf
    depends_on:
      - opendeepwiki
      - opendeepwiki-web
    networks:
      - 1panel-network
    labels:
      createdBy: "Apps"

  aspire-dashboard:
    image: mcr.microsoft.com/dotnet/aspire-dashboard
    container_name: ${CONTAINER_NAME}-aspire-dashboard
    restart: always
    environment:
      - TZ=Asia/Shanghai
      - Dashboard:ApplicationName=Aspire
    networks:
      - 1panel-network
    labels:
      createdBy: "Apps"

networks:
  1panel-network:
    external: true

37

apps/opendeepwiki/latest/nginx/nginx.conf

Normal file


@@ -0,0 +1,37 @@

server {
    listen 80;
    server_name localhost;

    # Limit upload size to 100 MB
    client_max_body_size 100M;

    # Logging
    access_log /var/log/nginx/access.log;
    error_log /var/log/nginx/error.log;

    # Proxy all /api/ requests to the backend service
    location /api/ {
        proxy_pass http://opendeepwiki:8080/api/;
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection 'upgrade';
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
        proxy_cache_bypass $http_upgrade;
    }

    # Forward all other requests to the frontend service
    location / {
        proxy_pass http://opendeepwiki-web:3000;
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection 'upgrade';
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
        proxy_cache_bypass $http_upgrade;
    }
}

BIN

apps/opendeepwiki/logo.png

Normal file


Binary file not shown.

|

After Width: | Height: | Size: 537 KiB |
