mirror of https://github.com/arch3rPro/1Panel-Appstore.git

synced 2026-04-14 16:07:13 +08:00

feat: add Next AI Draw.io app and related configuration

17 README.md

@@ -668,6 +668,23 @@ AI驱动的开源代码知识库与文档协作平台,支持多模型、多数

</tr>
</table>

<table>
<tr>
<td width="33%" align="center">

<a href="./apps/next-ai-draw-io/README.md">
<img src="./apps/next-ai-draw-io/logo.png" width="60" height="60" alt="Next-AI-Draw-IO">
<br><b>Next AI Draw.io</b>
</a>

🤖 AI-powered diagram creation tool

<kbd>0.4.1</kbd> • [Official website](https://next-ai-drawio.jiang.jp/)

</td>
</tr>
</table>

#### 🎵 Multimedia Management

<table>

93 apps/next-ai-draw-io/0.4.1/.env.local Normal file

@@ -0,0 +1,93 @@

# AI Provider Configuration
# AI_PROVIDER: Which provider to use
# Options: bedrock, openai, anthropic, google, azure, ollama, openrouter, deepseek, siliconflow
# Default: bedrock
AI_PROVIDER=bedrock

# AI_MODEL: The model ID for your chosen provider (REQUIRED)
AI_MODEL=global.anthropic.claude-sonnet-4-5-20250929-v1:0

# AWS Bedrock Configuration
# AWS_REGION=us-east-1
# AWS_ACCESS_KEY_ID=your-access-key-id
# AWS_SECRET_ACCESS_KEY=your-secret-access-key
# Note: Claude and Nova models support reasoning/extended thinking
# BEDROCK_REASONING_BUDGET_TOKENS=12000 # Optional: Claude reasoning budget in tokens (1024-64000)
# BEDROCK_REASONING_EFFORT=medium # Optional: Nova reasoning effort (low/medium/high)

# OpenAI Configuration
# OPENAI_API_KEY=sk-...
# OPENAI_BASE_URL=https://api.openai.com/v1 # Optional: Custom OpenAI-compatible endpoint
# OPENAI_ORGANIZATION=org-... # Optional
# OPENAI_PROJECT=proj_... # Optional
# Note: o1/o3/gpt-5 models automatically enable reasoning summary (default: detailed)
# OPENAI_REASONING_EFFORT=low # Optional: Reasoning effort (minimal/low/medium/high) - for o1/o3/gpt-5
# OPENAI_REASONING_SUMMARY=detailed # Optional: Override reasoning summary (none/brief/detailed)

# Anthropic (Direct) Configuration
# ANTHROPIC_API_KEY=sk-ant-...
# ANTHROPIC_BASE_URL=https://your-custom-anthropic/v1
# ANTHROPIC_THINKING_TYPE=enabled # Optional: Anthropic extended thinking (enabled)
# ANTHROPIC_THINKING_BUDGET_TOKENS=12000 # Optional: Budget for extended thinking in tokens

# Google Generative AI Configuration
# GOOGLE_GENERATIVE_AI_API_KEY=...
# GOOGLE_BASE_URL=https://generativelanguage.googleapis.com/v1beta # Optional: Custom endpoint
# GOOGLE_CANDIDATE_COUNT=1 # Optional: Number of candidates to generate
# GOOGLE_TOP_K=40 # Optional: Top K sampling parameter
# GOOGLE_TOP_P=0.95 # Optional: Nucleus sampling parameter
# Note: Gemini 2.5/3 models automatically enable reasoning display (includeThoughts: true)
# GOOGLE_THINKING_BUDGET=8192 # Optional: Gemini 2.5 thinking budget in tokens (for more/less thinking)
# GOOGLE_THINKING_LEVEL=high # Optional: Gemini 3 thinking level (low/high)

# Azure OpenAI Configuration
# Configure endpoint using ONE of these methods:
# 1. AZURE_RESOURCE_NAME - SDK constructs: https://{name}.openai.azure.com/openai/v1{path}
# 2. AZURE_BASE_URL - SDK appends /v1{path} to your URL
# If both are set, AZURE_BASE_URL takes precedence.
# AZURE_RESOURCE_NAME=your-resource-name
# AZURE_API_KEY=...
# AZURE_BASE_URL=https://your-resource.openai.azure.com/openai # Alternative: Custom endpoint
# AZURE_REASONING_EFFORT=low # Optional: Azure reasoning effort (low, medium, high)
# AZURE_REASONING_SUMMARY=detailed

# Ollama (Local) Configuration
# OLLAMA_BASE_URL=http://localhost:11434/api # Optional, defaults to localhost
# OLLAMA_ENABLE_THINKING=true # Optional: Enable thinking for models that support it (e.g., qwen3)

# OpenRouter Configuration
# OPENROUTER_API_KEY=sk-or-v1-...
# OPENROUTER_BASE_URL=https://openrouter.ai/api/v1 # Optional: Custom endpoint

# DeepSeek Configuration
# DEEPSEEK_API_KEY=sk-...
# DEEPSEEK_BASE_URL=https://api.deepseek.com/v1 # Optional: Custom endpoint

# SiliconFlow Configuration (OpenAI-compatible)
# Base domain can be .com or .cn, defaults to https://api.siliconflow.com/v1
# SILICONFLOW_API_KEY=sk-...
# SILICONFLOW_BASE_URL=https://api.siliconflow.com/v1 # Optional: switch to https://api.siliconflow.cn/v1 if needed

# Langfuse Observability (Optional)
# Enable LLM tracing and analytics - https://langfuse.com
# LANGFUSE_PUBLIC_KEY=pk-lf-...
# LANGFUSE_SECRET_KEY=sk-lf-...
# LANGFUSE_BASEURL=https://cloud.langfuse.com # EU region, use https://us.cloud.langfuse.com for US

# Temperature (Optional)
# Controls randomness in AI responses. Lower = more deterministic.
# Leave unset for models that don't support temperature (e.g., GPT-5.1 reasoning models)
# TEMPERATURE=0

# Access Control (Optional)
# ACCESS_CODE_LIST=your-secret-code,another-code

# Draw.io Configuration (Optional)
# NEXT_PUBLIC_DRAWIO_BASE_URL=https://embed.diagrams.net # Default: https://embed.diagrams.net
# Use this to point to a self-hosted draw.io instance

# PDF Input Feature (Optional)
# Enable PDF file upload to extract text and generate diagrams
# Enabled by default. Set to "false" to disable.
# ENABLE_PDF_INPUT=true
# NEXT_PUBLIC_MAX_EXTRACTED_CHARS=150000 # Max characters for PDF/text extraction (default: 150000)
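Almost every line in the `.env.local` file above is commented out, so it can help to check which settings are actually active. The following is a minimal sketch, assuming a simplified dotenv reading (one `KEY=value` per line, `#` at line start marks a comment, no quoting or inline-comment handling). The helper name `active_settings` and the shortened `sample` text are illustrative and not part of the commit.

```python
def active_settings(dotenv_text: str) -> dict:
    """Return KEY -> value for every uncommented KEY=value line."""
    settings = {}
    for raw in dotenv_text.splitlines():
        line = raw.strip()
        # Skip blank lines, comment lines, and anything without an assignment.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        settings[key.strip()] = value.strip()
    return settings


# Shortened excerpt of the file above (hypothetical sample for illustration).
sample = """\
# Default: bedrock
AI_PROVIDER=bedrock

# AI_MODEL: The model ID for your chosen provider (REQUIRED)
AI_MODEL=global.anthropic.claude-sonnet-4-5-20250929-v1:0

# AWS_REGION=us-east-1
# TEMPERATURE=0
"""

if __name__ == "__main__":
    for key, value in active_settings(sample).items():
        print(f"{key} = {value}")
```

Under these assumptions only `AI_PROVIDER` and `AI_MODEL` are reported as set; provider credentials such as the AWS keys stay inactive until they are uncommented.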

10 apps/next-ai-draw-io/0.4.1/data.yml Normal file

@@ -0,0 +1,10 @@

additionalProperties:
  formFields:
    - default: "3000"
      envKey: PANEL_APP_PORT_HTTP
      required: true
      type: number
      labelEn: Port
      labelZh: 应用端口
      edit: true
      rule: paramPort

25 apps/next-ai-draw-io/0.4.1/docker-compose.yml Normal file

@@ -0,0 +1,25 @@

services:
  next-ai-draw-io:
    image: ghcr.io/dayuanjiang/next-ai-draw-io:0.4.1
    ports:
      - ${PANEL_APP_PORT_HTTP}:3000
    depends_on:
      - drawio
    env_file: .env.local
    container_name: ${CONTAINER_NAME}
    networks:
      - 1panel-network
    labels:
      createdBy: Apps
  drawio:
    image: jgraph/drawio:latest
    ports:
      - 8080:8080
    container_name: ${CONTAINER_NAME}-drawio
    networks:
      - 1panel-network
    labels:
      createdBy: Apps
networks:
  1panel-network:
    external: true
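The compose file above relies on `${PANEL_APP_PORT_HTTP}` and `${CONTAINER_NAME}` being filled in before the containers start. As a rough illustration of that substitution, the sketch below uses Python's `string.Template`, which happens to share the `${NAME}` syntax. It only approximates Compose interpolation (no `${VAR:-default}` forms), and the helper name `render` and the container name `next-ai-draw-io` are assumptions made for the example.

```python
from string import Template


def render(value: str, env: dict) -> str:
    """Substitute ${NAME} placeholders; raises KeyError if a name is missing."""
    return Template(value).substitute(env)


# Hypothetical values: the port matches the form-field default "3000" in data.yml,
# the container name is made up for this example.
env = {"PANEL_APP_PORT_HTTP": "3000", "CONTAINER_NAME": "next-ai-draw-io"}

if __name__ == "__main__":
    print(render("${PANEL_APP_PORT_HTTP}:3000", env))   # host:container port mapping
    print(render("${CONTAINER_NAME}-drawio", env))      # name of the drawio container
```

Note that the `drawio` service publishes a fixed host port (`8080:8080`), so only the application port is adjustable through the form field.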

72

apps/next-ai-draw-io/README.md

Normal file

72

apps/next-ai-draw-io/README.md

Normal file

@@ -0,0 +1,72 @@

|

|||||||

|

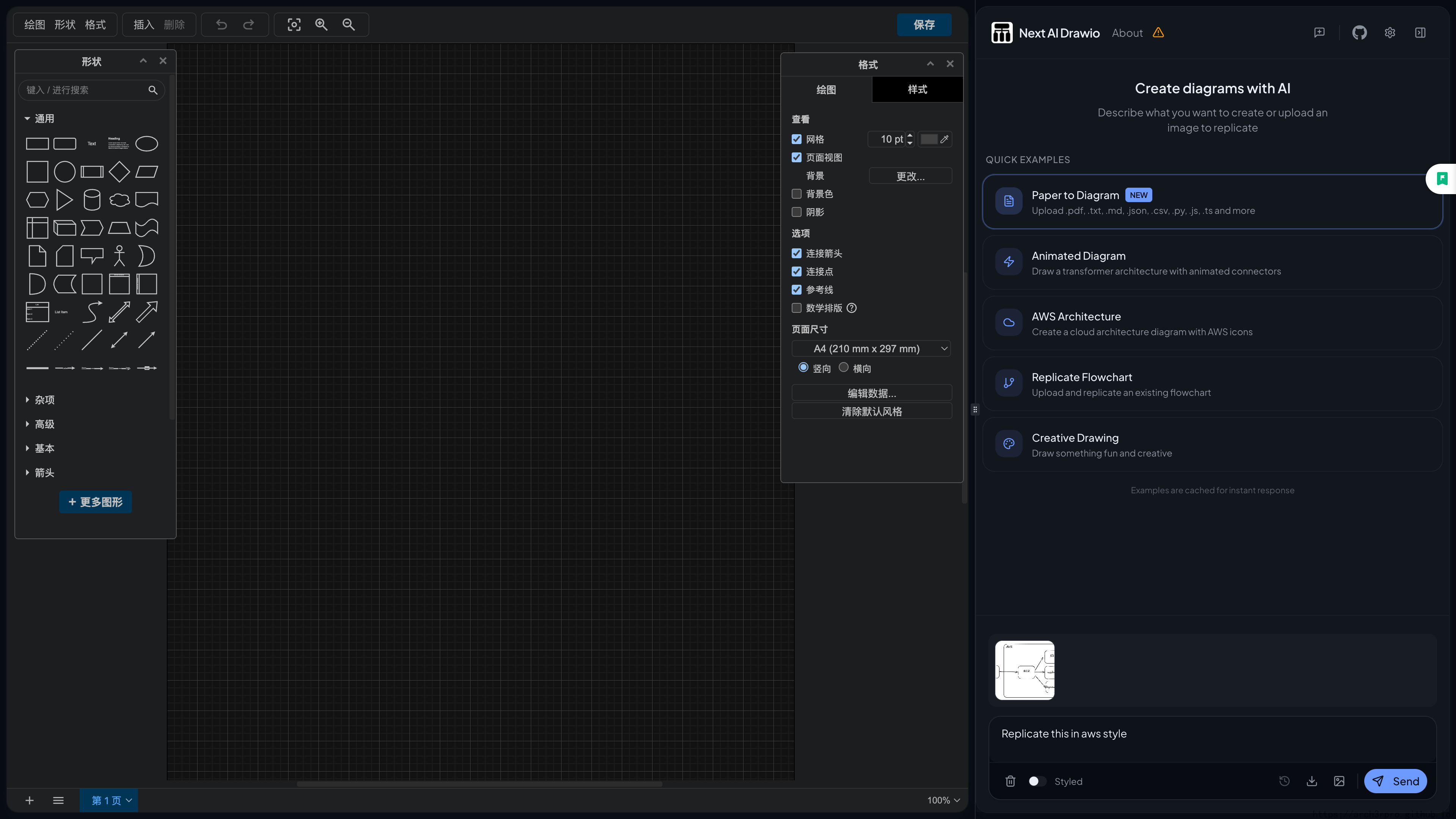

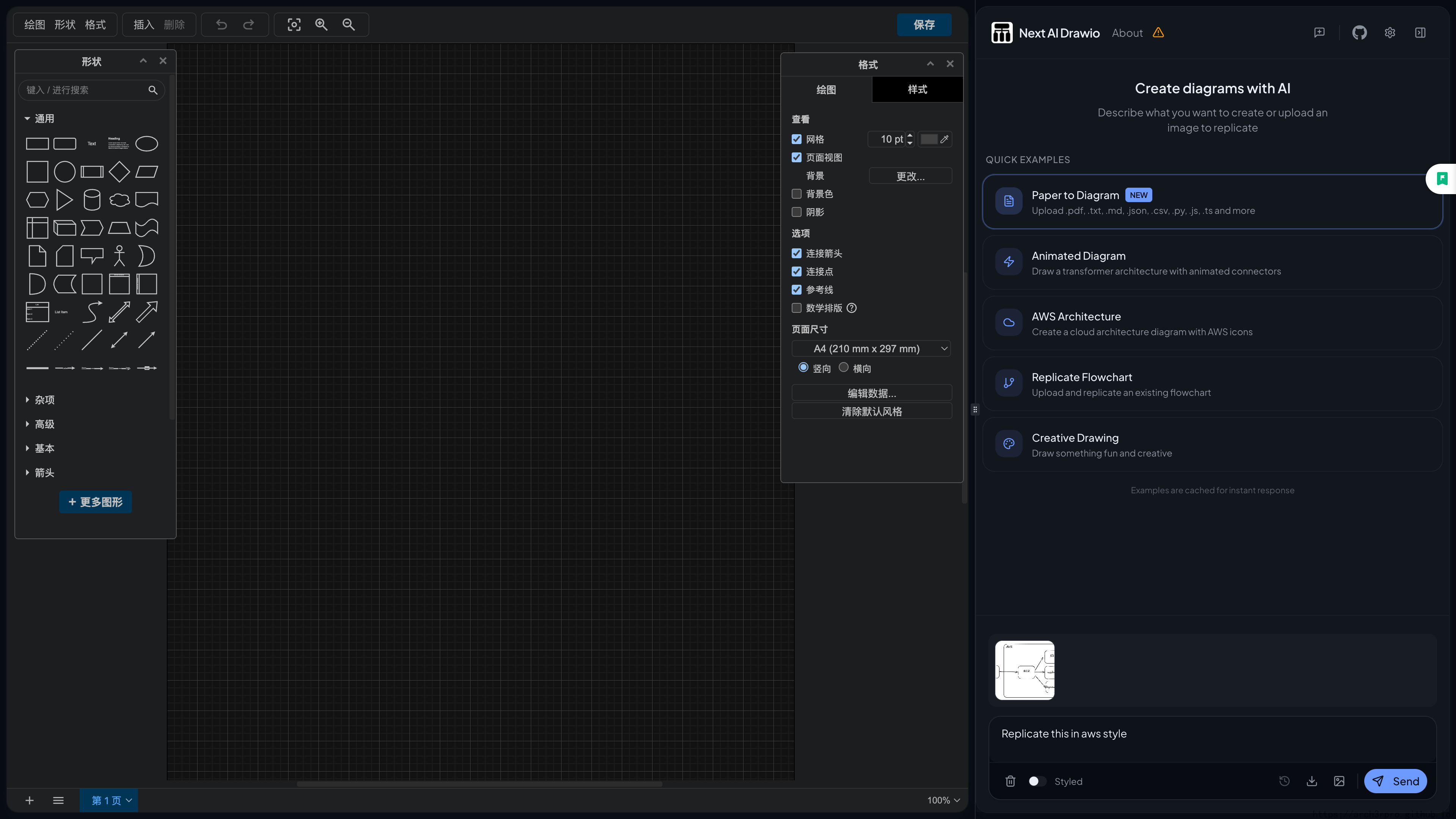

# Next AI Draw.io

|

||||||

|

|

||||||

|

<div align="center">

|

||||||

|

|

||||||

|

**AI驱动的图表创建工具 - 对话、绘制、可视化**

|

||||||

|

|

||||||

|

</div>

|

||||||

|

|

||||||

|

一个集成了AI功能的Next.js网页应用,与draw.io图表无缝结合。通过自然语言命令和AI辅助可视化来创建、修改和增强图表。

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

## 功能特性

|

||||||

|

|

||||||

|

- **LLM驱动的图表创建**:利用大语言模型通过自然语言命令直接创建和操作draw.io图表

|

||||||

|

- **基于图像的图表复制**:上传现有图表或图像,让AI自动复制和增强

|

||||||

|

- **PDF和文本文件上传**:上传PDF文档和文本文件,提取内容并从现有文档生成图表

|

||||||

|

- **AI推理过程显示**:查看支持模型的AI思考过程(OpenAI o1/o3、Gemini、Claude等)

|

||||||

|

- **图表历史记录**:全面的版本控制,跟踪所有更改,允许您查看和恢复AI编辑前的图表版本

|

||||||

|

- **交互式聊天界面**:与AI实时对话来完善您的图表

|

||||||

|

- **云架构图支持**:专门支持生成云架构图(AWS、GCP、Azure)

|

||||||

|

- **动画连接器**:在图表元素之间创建动态动画连接器,实现更好的可视化效果

|

||||||

|

|

||||||

|

## 快速开始

|

||||||

|

|

||||||

|

### 在线试用

|

||||||

|

|

||||||

|

无需安装!直接在我们的演示站点试用:

|

||||||

|

|

||||||

|

[Live Demo](https://next-ai-drawio.jiang.jp/)

|

||||||

|

|

||||||

|

> 注意:由于访问量较大,演示站点目前使用 minimax-m2 模型。如需获得最佳效果,建议使用 Claude Sonnet 4.5 或 Claude Opus 4.5 自行部署。

|

||||||

|

|

||||||

|

> **使用自己的 API Key**:您可以使用自己的 API Key 来绕过演示站点的用量限制。点击聊天面板中的设置图标即可配置您的 Provider 和 API Key。您的 Key 仅保存在浏览器本地,不会被存储在服务器上。

|

||||||

|

|

||||||

|

|

||||||

|

3. 配置您的AI提供商:

|

||||||

|

|

||||||

|

编辑 `.env.local` 并配置您选择的提供商:

|

||||||

|

|

||||||

|

- 将 `AI_PROVIDER` 设置为您选择的提供商(bedrock, openai, anthropic, google, azure, ollama, openrouter, deepseek, siliconflow)

|

||||||

|

- 将 `AI_MODEL` 设置为您要使用的特定模型

|

||||||

|

- 添加您的提供商所需的API密钥

|

||||||

|

- `TEMPERATURE`:可选的温度设置(例如 `0` 表示确定性输出)。对于不支持此参数的模型(如推理模型),请不要设置。

|

||||||

|

- `ACCESS_CODE_LIST` 访问密码,可选,可以使用逗号隔开多个密码。

|

||||||

|

|

||||||

|

> 警告:如果不填写 `ACCESS_CODE_LIST`,则任何人都可以直接使用你部署后的网站,可能会导致你的 token 被急速消耗完毕,建议填写此选项。

|

||||||

|

|

||||||

|

详细设置说明请参阅[提供商配置指南](https://github.com/DayuanJiang/next-ai-draw-io/blob/main/docs/ai-providers.md)。

|

||||||

|

|

||||||

|

## 多提供商支持

|

||||||

|

|

||||||

|

- AWS Bedrock(默认)

|

||||||

|

- OpenAI

|

||||||

|

- Anthropic

|

||||||

|

- Google AI

|

||||||

|

- Azure OpenAI

|

||||||

|

- Ollama

|

||||||

|

- OpenRouter

|

||||||

|

- DeepSeek

|

||||||

|

- SiliconFlow

|

||||||

|

|

||||||

|

除AWS Bedrock和OpenRouter外,所有提供商都支持自定义端点。

|

||||||

|

|

||||||

|

📖 **[详细的提供商配置指南](https://github.com/DayuanJiang/next-ai-draw-io/blob/main/docs/ai-providers.md)** - 查看各提供商的设置说明。

|

||||||

|

|

||||||

|

**模型要求**:此任务需要强大的模型能力,因为它涉及生成具有严格格式约束的长文本(draw.io XML)。推荐使用Claude Sonnet 4.5、GPT-4o、Gemini 2.0和DeepSeek V3/R1。

|

||||||

|

|

||||||

|

注意:`claude-sonnet-4-5` 已在带有AWS标志的draw.io图表上进行训练,因此如果您想创建AWS架构图,这是最佳选择。

|

||||||

72 apps/next-ai-draw-io/README_en.md Normal file

@@ -0,0 +1,72 @@

# Next AI Draw.io

<div align="center">

**AI-Powered Diagram Creation Tool - Chat, Draw, Visualize**

</div>

A Next.js web application that integrates AI capabilities with draw.io diagrams. Create, modify, and enhance diagrams through natural language commands and AI-assisted visualization.

## Features

- **LLM-Powered Diagram Creation**: Use large language models to create and manipulate draw.io diagrams directly through natural language commands
- **Image-Based Diagram Replication**: Upload existing diagrams or images and let the AI replicate and enhance them automatically
- **PDF and Text File Upload**: Upload PDF documents and text files to extract their content and generate diagrams from existing documents
- **AI Reasoning Process Display**: View the AI's thinking process for supported models (OpenAI o1/o3, Gemini, Claude, etc.)
- **Diagram History**: Comprehensive version control that tracks all changes, so you can view and restore diagram versions from before AI edits
- **Interactive Chat Interface**: Converse with the AI in real time to refine your diagrams
- **Cloud Architecture Diagram Support**: Specialized support for generating cloud architecture diagrams (AWS, GCP, Azure)
- **Animated Connectors**: Create dynamic, animated connectors between diagram elements for better visualization

## Quick Start

### Try Online

No installation required! Try it directly on our demo site:

[Live Demo](https://next-ai-drawio.jiang.jp/)

> Note: Due to high traffic, the demo site currently uses the minimax-m2 model. For best results, self-host with Claude Sonnet 4.5 or Claude Opus 4.5.

> **Use Your Own API Key**: You can use your own API key to bypass the demo site's usage limits. Click the settings icon in the chat panel to configure your provider and API key. Your key is saved only in your browser and is never stored on the server.

3. Configure your AI provider:

Edit `.env.local` and configure your chosen provider:

- Set `AI_PROVIDER` to your chosen provider (bedrock, openai, anthropic, google, azure, ollama, openrouter, deepseek, siliconflow)
- Set `AI_MODEL` to the specific model you want to use
- Add the API key required by your provider
- `TEMPERATURE`: Optional temperature setting (e.g. `0` for deterministic output). Leave it unset for models that do not support it (such as reasoning models).
- `ACCESS_CODE_LIST`: Optional access passwords; separate multiple passwords with commas.

> Warning: If `ACCESS_CODE_LIST` is not set, anyone can use your deployed site directly, which may burn through your tokens very quickly. Setting this option is recommended.

For detailed setup instructions, see the [Provider Configuration Guide](https://github.com/DayuanJiang/next-ai-draw-io/blob/main/docs/ai-providers.md).

## Multi-Provider Support

- AWS Bedrock (default)
- OpenAI
- Anthropic
- Google AI
- Azure OpenAI
- Ollama
- OpenRouter
- DeepSeek
- SiliconFlow

All providers except AWS Bedrock and OpenRouter support custom endpoints.

📖 **[Detailed Provider Configuration Guide](https://github.com/DayuanJiang/next-ai-draw-io/blob/main/docs/ai-providers.md)** - See the setup instructions for each provider.

**Model Requirements**: This task requires a highly capable model because it involves generating long text (draw.io XML) under strict format constraints. Recommended models are Claude Sonnet 4.5, GPT-4o, Gemini 2.0, and DeepSeek V3/R1.

Note: `claude-sonnet-4-5` has been trained on draw.io diagrams with AWS logos, so it is the best choice if you want to create AWS architecture diagrams.
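The README above describes `ACCESS_CODE_LIST` as a comma-separated list of passwords and warns that leaving it unset opens the site to everyone. A minimal sketch of that behaviour follows; it is not the application's actual check, which this commit does not show. The function names `parse_access_codes` and `is_allowed` are hypothetical.

```python
from typing import Optional, Set


def parse_access_codes(raw: Optional[str]) -> Set[str]:
    """Split a comma-separated ACCESS_CODE_LIST value into a set of codes."""
    if not raw:
        return set()
    # Tolerate stray spaces and empty entries such as "a,,b".
    return {code.strip() for code in raw.split(",") if code.strip()}


def is_allowed(supplied: str, raw_list: Optional[str]) -> bool:
    """Assumed policy: no configured codes means open access, as the README warns."""
    codes = parse_access_codes(raw_list)
    if not codes:
        return True
    return supplied in codes


if __name__ == "__main__":
    configured = "your-secret-code,another-code"  # example value from .env.local
    print(is_allowed("another-code", configured))
    print(is_allowed("wrong-code", configured))
    print(is_allowed("anything", None))
```

The last call shows why the README recommends setting the option: with no list configured, every request is accepted under this assumed policy.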

26 apps/next-ai-draw-io/data.yml Normal file

@@ -0,0 +1,26 @@

name: Next AI Draw.io
tags:
  - 实用工具
  - Web 服务器
title: AI驱动的图表创建工具 - 对话、绘制、可视化
description:
  en: AI-Powered Diagram Creation Tool - Chat, Draw, Visualize
  zh: AI驱动的图表创建工具 - 对话、绘制、可视化
additionalProperties:
  key: next-ai-draw-io
  name: Next AI Draw.io
  tags:
    - Tool
    - Server
  shortDescZh: AI驱动的图表创建工具 - 对话、绘制、可视化
  shortDescEn: AI-Powered Diagram Creation Tool - Chat, Draw, Visualize
  type: website
  crossVersionUpdate: true
  limit: 0
  website: https://next-ai-drawio.jiang.jp/
  github: https://github.com/DayuanJiang/next-ai-draw-io
  document: https://github.com/DayuanJiang/next-ai-draw-io
  memoryRequired: 2048
  architectures:
    - amd64
    - arm64

93 apps/next-ai-draw-io/latest/.env_file Normal file

@@ -0,0 +1,93 @@

# AI Provider Configuration
# AI_PROVIDER: Which provider to use
# Options: bedrock, openai, anthropic, google, azure, ollama, openrouter, deepseek, siliconflow
# Default: bedrock
AI_PROVIDER=bedrock

# AI_MODEL: The model ID for your chosen provider (REQUIRED)
AI_MODEL=global.anthropic.claude-sonnet-4-5-20250929-v1:0

# AWS Bedrock Configuration
# AWS_REGION=us-east-1
# AWS_ACCESS_KEY_ID=your-access-key-id
# AWS_SECRET_ACCESS_KEY=your-secret-access-key
# Note: Claude and Nova models support reasoning/extended thinking
# BEDROCK_REASONING_BUDGET_TOKENS=12000 # Optional: Claude reasoning budget in tokens (1024-64000)
# BEDROCK_REASONING_EFFORT=medium # Optional: Nova reasoning effort (low/medium/high)

# OpenAI Configuration
# OPENAI_API_KEY=sk-...
# OPENAI_BASE_URL=https://api.openai.com/v1 # Optional: Custom OpenAI-compatible endpoint
# OPENAI_ORGANIZATION=org-... # Optional
# OPENAI_PROJECT=proj_... # Optional
# Note: o1/o3/gpt-5 models automatically enable reasoning summary (default: detailed)
# OPENAI_REASONING_EFFORT=low # Optional: Reasoning effort (minimal/low/medium/high) - for o1/o3/gpt-5
# OPENAI_REASONING_SUMMARY=detailed # Optional: Override reasoning summary (none/brief/detailed)

# Anthropic (Direct) Configuration
# ANTHROPIC_API_KEY=sk-ant-...
# ANTHROPIC_BASE_URL=https://your-custom-anthropic/v1
# ANTHROPIC_THINKING_TYPE=enabled # Optional: Anthropic extended thinking (enabled)
# ANTHROPIC_THINKING_BUDGET_TOKENS=12000 # Optional: Budget for extended thinking in tokens

# Google Generative AI Configuration
# GOOGLE_GENERATIVE_AI_API_KEY=...
# GOOGLE_BASE_URL=https://generativelanguage.googleapis.com/v1beta # Optional: Custom endpoint
# GOOGLE_CANDIDATE_COUNT=1 # Optional: Number of candidates to generate
# GOOGLE_TOP_K=40 # Optional: Top K sampling parameter
# GOOGLE_TOP_P=0.95 # Optional: Nucleus sampling parameter
# Note: Gemini 2.5/3 models automatically enable reasoning display (includeThoughts: true)
# GOOGLE_THINKING_BUDGET=8192 # Optional: Gemini 2.5 thinking budget in tokens (for more/less thinking)
# GOOGLE_THINKING_LEVEL=high # Optional: Gemini 3 thinking level (low/high)

# Azure OpenAI Configuration
# Configure endpoint using ONE of these methods:
# 1. AZURE_RESOURCE_NAME - SDK constructs: https://{name}.openai.azure.com/openai/v1{path}
# 2. AZURE_BASE_URL - SDK appends /v1{path} to your URL
# If both are set, AZURE_BASE_URL takes precedence.
# AZURE_RESOURCE_NAME=your-resource-name
# AZURE_API_KEY=...
# AZURE_BASE_URL=https://your-resource.openai.azure.com/openai # Alternative: Custom endpoint
# AZURE_REASONING_EFFORT=low # Optional: Azure reasoning effort (low, medium, high)
# AZURE_REASONING_SUMMARY=detailed

# Ollama (Local) Configuration
# OLLAMA_BASE_URL=http://localhost:11434/api # Optional, defaults to localhost
# OLLAMA_ENABLE_THINKING=true # Optional: Enable thinking for models that support it (e.g., qwen3)

# OpenRouter Configuration
# OPENROUTER_API_KEY=sk-or-v1-...
# OPENROUTER_BASE_URL=https://openrouter.ai/api/v1 # Optional: Custom endpoint

# DeepSeek Configuration
# DEEPSEEK_API_KEY=sk-...
# DEEPSEEK_BASE_URL=https://api.deepseek.com/v1 # Optional: Custom endpoint

# SiliconFlow Configuration (OpenAI-compatible)
# Base domain can be .com or .cn, defaults to https://api.siliconflow.com/v1
# SILICONFLOW_API_KEY=sk-...
# SILICONFLOW_BASE_URL=https://api.siliconflow.com/v1 # Optional: switch to https://api.siliconflow.cn/v1 if needed

# Langfuse Observability (Optional)
# Enable LLM tracing and analytics - https://langfuse.com
# LANGFUSE_PUBLIC_KEY=pk-lf-...
# LANGFUSE_SECRET_KEY=sk-lf-...
# LANGFUSE_BASEURL=https://cloud.langfuse.com # EU region, use https://us.cloud.langfuse.com for US

# Temperature (Optional)
# Controls randomness in AI responses. Lower = more deterministic.
# Leave unset for models that don't support temperature (e.g., GPT-5.1 reasoning models)
# TEMPERATURE=0

# Access Control (Optional)
# ACCESS_CODE_LIST=your-secret-code,another-code

# Draw.io Configuration (Optional)
# NEXT_PUBLIC_DRAWIO_BASE_URL=https://embed.diagrams.net # Default: https://embed.diagrams.net
# Use this to point to a self-hosted draw.io instance

# PDF Input Feature (Optional)
# Enable PDF file upload to extract text and generate diagrams
# Enabled by default. Set to "false" to disable.
# ENABLE_PDF_INPUT=true
# NEXT_PUBLIC_MAX_EXTRACTED_CHARS=150000 # Max characters for PDF/text extraction (default: 150000)
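The Azure comments in the environment file above describe two ways to form the endpoint and a precedence rule between them. The sketch below restates that rule in code, purely as an illustration of the comments; it is not the SDK's implementation. The function name `azure_endpoint`, the trailing-slash trimming, and the example path `/chat/completions` are assumptions.

```python
from typing import Optional


def azure_endpoint(path: str,
                   resource_name: Optional[str] = None,
                   base_url: Optional[str] = None) -> str:
    """Build the request URL following the rules stated in the env file comments."""
    if base_url:
        # Method 2: /v1{path} is appended to the configured URL.
        # AZURE_BASE_URL takes precedence when both values are set.
        return f"{base_url.rstrip('/')}/v1{path}"  # rstrip is an assumption of this sketch
    if resource_name:
        # Method 1: the URL is constructed from the resource name.
        return f"https://{resource_name}.openai.azure.com/openai/v1{path}"
    raise ValueError("set AZURE_RESOURCE_NAME or AZURE_BASE_URL")


if __name__ == "__main__":
    print(azure_endpoint("/chat/completions", resource_name="your-resource-name"))
    print(azure_endpoint("/chat/completions",
                         resource_name="your-resource-name",
                         base_url="https://your-resource.openai.azure.com/openai"))
```

With the placeholder values from the file, both calls resolve to an `/openai/v1/...` URL, which is why only one of the two variables needs to be set.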

10 apps/next-ai-draw-io/latest/data.yml Normal file

@@ -0,0 +1,10 @@

additionalProperties:
  formFields:
    - default: "3000"
      envKey: PANEL_APP_PORT_HTTP
      required: true
      type: number
      labelEn: Port
      labelZh: 应用端口
      edit: true
      rule: paramPort

25 apps/next-ai-draw-io/latest/docker-compose.yml Normal file

@@ -0,0 +1,25 @@

services:
  next-ai-draw-io:
    image: ghcr.io/dayuanjiang/next-ai-draw-io:latest
    ports:
      - ${PANEL_APP_PORT_HTTP}:3000
    depends_on:
      - drawio
    env_file: .env.local
    container_name: ${CONTAINER_NAME}
    networks:
      - 1panel-network
    labels:
      createdBy: Apps
  drawio:
    image: jgraph/drawio:latest
    ports:
      - 8080:8080
    container_name: ${CONTAINER_NAME}-drawio
    networks:
      - 1panel-network
    labels:
      createdBy: Apps
networks:
  1panel-network:
    external: true

BIN apps/next-ai-draw-io/logo.png Normal file

Binary file not shown. After: Size: 3.1 KiB